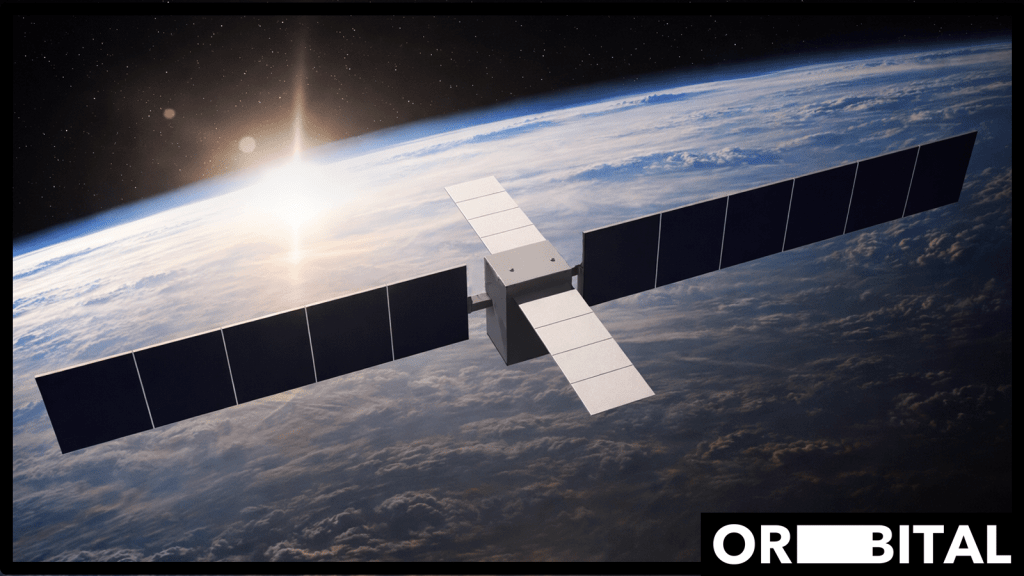

Via EdgeIR.com

Orbital is developing AI data centers in low Earth orbit to address the energy and cooling constraints limiting terrestrial AI infrastructure.

The data centers will run on solar power and use radiative cooling, taking advantage of the near-continuous solar energy available in orbit and the ability to reject waste heat by radiating it directly into space.

“AI progress is being constrained by the grid,” says Euwyn Poon, CEO and founder of Orbital. “Data center economics are dominated by electricity and cooling, and both are getting harder. In orbit, solar power is continuous and cooling is fundamentally different. Orbital is building compute infrastructure that removes the energy ceiling and scales with AI’s potential.”

Orbital’s first test mission, Orbital-1, is expected to launch on a SpaceX Falcon 9 in April 2027 to demonstrate GPU operation and AI inference workloads in orbit.

The effort is being funded by a16z Speedrun, a program created to back highly ambitious and innovative projects.

Orbital’s satellites will carry NVIDIA-powered servers designed to run AI inference workloads across a constellation of nodes.

The company’s FCC filing marks its next step as it prepares to deploy a constellation of satellites for orbital AI compute infrastructure.

The program aims to remove the energy constraint on AI advancement by developing scalable, space-based compute infrastructure.

Orbital represents a highly speculative but conceptually coherent extension of the “beyond-the-grid” trend already emerging on Earth (e.g., behind-the-meter and off-grid AI factories). If the execution risks can be overcome, orbital compute could redefine the upper bound of AI infrastructure scaling. In the near term, however, it should be viewed less as a replacement for terrestrial infrastructure and more as an experimental frontier pushing the limits of energy-abundant, unconstrained AI compute.