Via EdgeIR.com: Lambda recently announced that it is becoming a launch partner for the NVIDIA Vera CPU platform and NVIDIA STX.

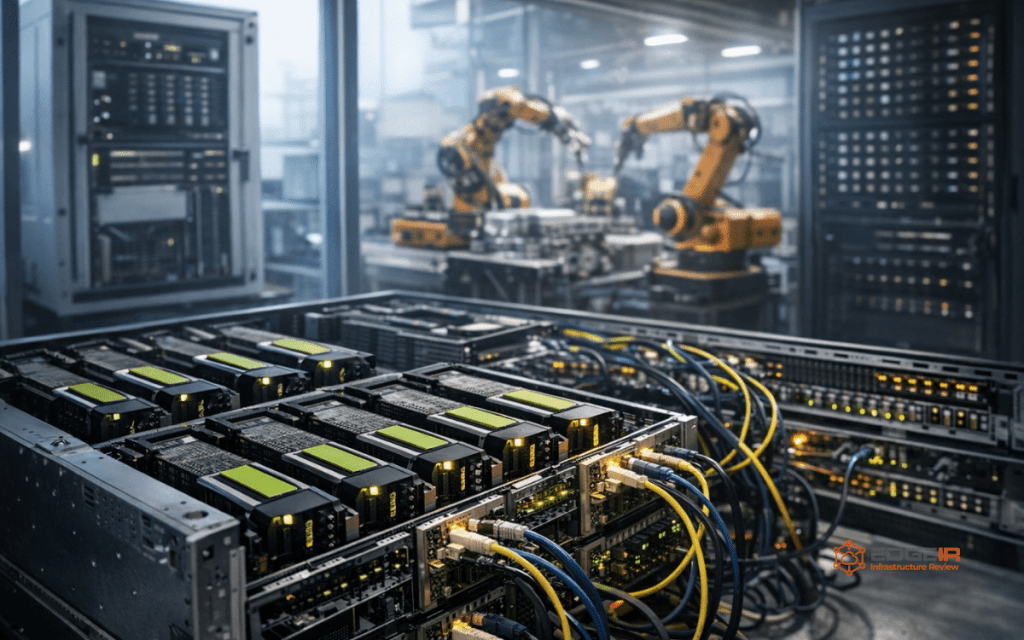

The GPU-native AI infrastructure provider will deploy NVIDIA Quantum-X800 InfiniBand Photonics co-packaged optics (CPO) switches in an AI factory with more than 10,000 NVIDIA Blackwell Ultra GPUs.

Lambda’s bare-metal instances have made their way out of the lab and into its core cloud offering. They give users direct access to the hardware and avoid virtualization overhead for distributed AI training workloads.

The NVIDIA Vera CPU platform is designed for launching thousands of parallel AI environments and delivers high memory bandwidth, which benefits reinforcement learning and agentic AI workloads.

NVIDIA STX is a modular AI storage architecture that supports inference, analytics, and training with next-generation, hardware-optimized KV-cache management.

Co-packaged optics (CPO) networking enables faster, more cost-efficient AI infrastructure for large-scale AI factories, easing major efficiency bottlenecks in conventional networking approaches.

“The race to build AI factories isn’t won on GPU counts alone,” says Dave Salvator, director of accelerated computing at NVIDIA. “Network architecture is what determines whether those systems can perform at scale. Getting this right is what allows AI infrastructure to power services used by hundreds of millions of people around the world.”

Lambda operates one of the largest deployments of NVIDIA Quantum-X800 CPO switches, underscoring how critical network architecture is when scaling AI systems.

These announcements further strengthen Lambda’s AI infrastructure platform, which serves frontier labs, enterprises, and hyperscalers with proven, energy-efficient systems built for reliability at scale.

Lambda continues its mission to make AI compute ubiquitous, leveraging a decade-long collaboration with NVIDIA to advance its Superintelligence Cloud platform.

http://dlvr.it/TRysYk
